T2I Models Explained

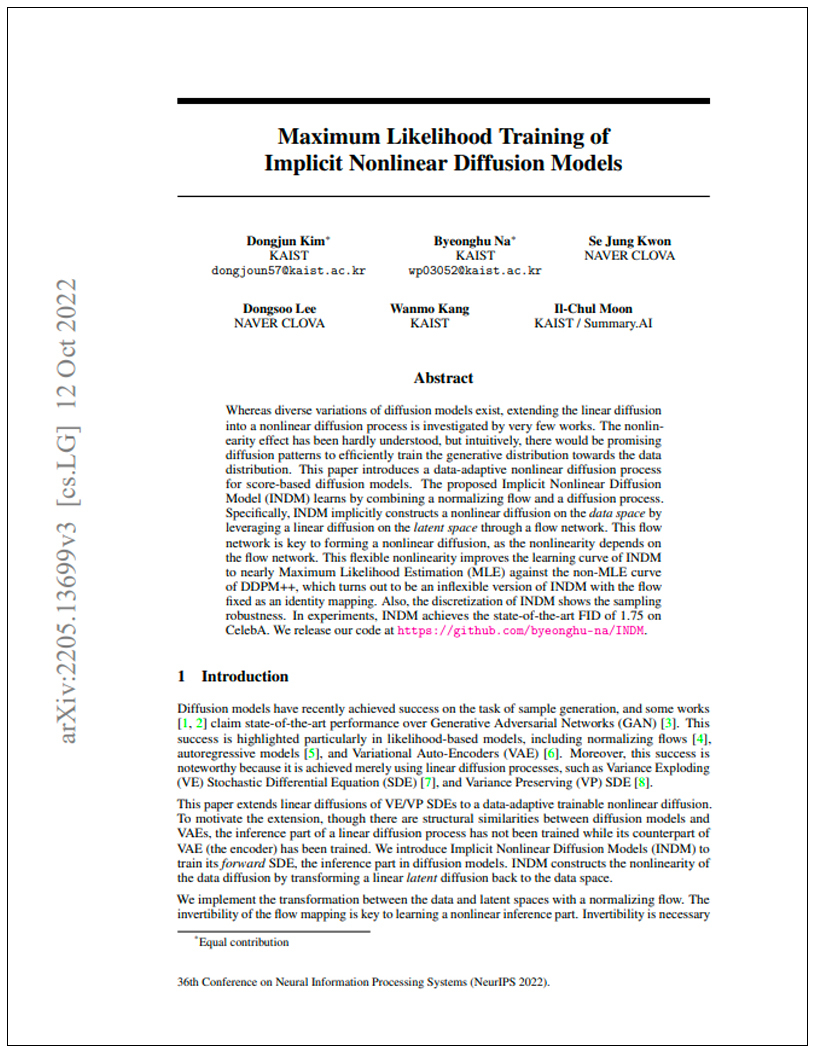

INDM

The Implicit Nonlinear Diffusion Model (INDM) uses a normalizing flow to transform a linear latent diffusion to the data space, enabling nonlinear inference. INDM has advantages over other models, including fast optimization, learning of drift and volatility coefficients, MLE training, and robustness in sampling discretization.


An Overview of INDM

The Implicit Nonlinear Diffusion Model (INDM) uses a normalizing flow to transform a linear latent diffusion to the data space, enabling nonlinear inference.

INDM outperforms DDPM++ and achieves a SOTA FID

1.75 FID Score

INDM surpasses DDPM++ and attains an FID of 1.75 on the CelebA dataset (lower is better), a new state-of-the-art result on that benchmark.

The CIFAR-10 dataset has 50,000 training images.

50K Images

The CIFAR-10 training split comprises 50,000 images, which are used to train Implicit Nonlinear Diffusion Models.

INDM has set new benchmarks for image generation

SOTA Results

INDM reports strong image generation results on CelebA 64x64 and CIFAR-10, including a state-of-the-art FID on CelebA.


- Introduction

- Key Highlights

- Training Details

- Key Results

- Business Applications

- Model Features

- Model Tasks

- Fine-tuning

- Benchmarking

- Sample Codes

- Limitations

- Other LLMs

About Model

The Implicit Nonlinear Diffusion Model (INDM) jointly trains a flow network and a diffusion process. By running a linear diffusion in the latent space of the flow, INDM induces a flexible nonlinear diffusion in the data space. INDM outperforms DDPM++ and achieves a state-of-the-art FID of 1.75 on CelebA.

Key highlights

Highlights of the Implicit Nonlinear Diffusion Model (INDM):

- INDM learns by jointly training a normalizing flow and a diffusion process.

- INDM constructs a nonlinear diffusion on the data space by running a linear diffusion on the latent space and connecting the two spaces through a flow network.

- The flow network is what gives INDM its flexible nonlinearity, which brings its training close to Maximum Likelihood Estimation (MLE).

- DDPM++ is a special, inflexible case of INDM in which the flow is fixed to the identity mapping.

- INDM's sampling is robust to the choice of discretization step size.

- INDM achieves a state-of-the-art FID of 1.75 on CelebA in experiments.
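The construction described in these highlights can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes a variance-preserving (VP) linear diffusion with a commonly used linear noise schedule, and it uses `arcsinh`/`sinh` as a hypothetical stand-in for the learned flow network and its inverse. Passing the identity as the flow gives a purely linear diffusion, which corresponds to the DDPM++ special case.

```python
import numpy as np

# Assumed VP schedule: beta(t) = BETA_MIN + t * (BETA_MAX - BETA_MIN), t in [0, 1].
BETA_MIN, BETA_MAX = 0.1, 20.0


def vp_coefficients(t):
    """Return (alpha_t, sigma_t) such that z_t = alpha_t * z_0 + sigma_t * eps."""
    integral = BETA_MIN * t + 0.5 * t**2 * (BETA_MAX - BETA_MIN)
    alpha = np.exp(-0.5 * integral)
    return alpha, np.sqrt(1.0 - alpha**2)


def diffuse(x0, t, eps, flow, inverse_flow):
    """Perturb data x0 to time t: flow to latent, diffuse linearly, flow back."""
    z0 = flow(x0)                      # data space -> latent space
    alpha, sigma = vp_coefficients(t)
    zt = alpha * z0 + sigma * eps      # linear diffusion in latent space
    return inverse_flow(zt)            # latent space -> data space


def identity(v):
    return v


rng = np.random.default_rng(0)
x0 = rng.uniform(-3.0, 3.0, size=5)
eps = rng.standard_normal(5)

# Identity flow: the data-space process is itself linear.
x_linear = diffuse(x0, 0.3, eps, identity, identity)
# Toy invertible flow (an assumption): the data-space process becomes nonlinear.
x_nonlinear = diffuse(x0, 0.3, eps, np.arcsinh, np.sinh)

print(x_linear)
print(x_nonlinear)
```

In the real model the flow is a trained network rather than a fixed function, so the shape of the data-space nonlinearity is learned along with the score.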

Training Details

Training data

The authors used the CIFAR-10 dataset to train their model. The Key Results table also reports scores on CelebA 64x64.

Training dataset size

The CIFAR-10 dataset has 50,000 training images.

Training Procedure

The authors used maximum likelihood estimation (MLE) to train their Implicit Nonlinear Diffusion Model (INDM).
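The exact training objective is not spelled out here. As a rough illustration of how a score model in latent space can be fit with a likelihood-oriented weighting, the sketch below evaluates a denoising score matching loss weighted by beta(t). Everything in it is an assumption made for illustration: the VP noise schedule, the one-dimensional Gaussian toy latents, and the two candidate score functions. INDM additionally trains the flow, which this sketch leaves out.

```python
import numpy as np

# Assumed VP schedule: beta(t) = BETA_MIN + t * (BETA_MAX - BETA_MIN), t in [0, 1].
BETA_MIN, BETA_MAX = 0.1, 20.0


def beta(t):
    return BETA_MIN + t * (BETA_MAX - BETA_MIN)


def vp_coefficients(t):
    integral = BETA_MIN * t + 0.5 * t**2 * (BETA_MAX - BETA_MIN)
    alpha = np.exp(-0.5 * integral)
    return alpha, np.sqrt(1.0 - alpha**2)


def dsm_loss(score_fn, z0, rng, t_min=0.01):
    """Monte Carlo estimate of the beta(t)-weighted denoising score matching loss."""
    t = rng.uniform(t_min, 1.0, size=z0.shape)
    eps = rng.standard_normal(z0.shape)
    alpha, sigma = vp_coefficients(t)
    zt = alpha * z0 + sigma * eps
    target = -eps / sigma  # score of the Gaussian transition kernel at zt
    return np.mean(beta(t) * (score_fn(zt, t) - target) ** 2)


def gaussian_score(mean, std):
    """Exact marginal score when z_0 ~ N(mean, std^2); stands in for a trained network."""
    def score(z, t):
        alpha, sigma = vp_coefficients(t)
        return -(z - alpha * mean) / ((alpha * std) ** 2 + sigma**2)
    return score


def zero_score(z, t):
    """A deliberately poor candidate: predicts a zero score everywhere."""
    return np.zeros_like(z)


data_rng = np.random.default_rng(0)
z0 = 1.0 + 0.5 * data_rng.standard_normal(50_000)  # toy latent data

# Use the same noise draws for both candidates so the comparison is fair.
loss_true = dsm_loss(gaussian_score(1.0, 0.5), z0, np.random.default_rng(1))
loss_zero = dsm_loss(zero_score, z0, np.random.default_rng(1))
print(loss_true, loss_zero)
```

The exact score attains the lower loss, which is the property a gradient-based optimizer exploits when the score is a neural network.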

Training time and resources

The authors reported a training time of about 3 days using 8 GPUs for their experiments.

Key Results

The table below lists the scores reported for INDM. FID and NLL are both lower-is-better metrics; VP and VE denote the variance-preserving and variance-exploding diffusion variants.

| Task | Dataset | Score |
| --- | --- | --- |
| Image Generation (VP, FID) | CelebA 64x64 | 1.75 |
| Image Generation (VE, FID) | CelebA 64x64 | 2.54 |
| Image Generation (VP, NLL) | CelebA 64x64 | 3.06 |
| Image Generation (ST) | CIFAR-10 | 3.25 |
| Image Generation (NLL) | CIFAR-10 | 4.79 |
| Image Generation (FID) | CIFAR-10 | 2.28 |
| Image Generation (VE, FID) | CIFAR-10 | 2.29 |
| Image Generation (VP, FID) | CIFAR-10 | 2.9 |
| Image Generation (VP, NLL) | CIFAR-10 | 5.3 |
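For readers unfamiliar with the FID metric used in these results, it is the Fréchet distance between two Gaussians fitted to feature vectors of real and generated images. The sketch below computes that distance with NumPy only. It is an illustration under assumptions: published FID scores use Inception-v3 features over many thousands of images, whereas the random 4-dimensional features here are placeholders, and `frechet_distance` is a name chosen for this example.

```python
import numpy as np


def frechet_distance(feats_a, feats_b):
    """Frechet distance between Gaussians fitted to two (n_samples, n_features) arrays."""
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    cov_a = np.cov(feats_a, rowvar=False)
    cov_b = np.cov(feats_b, rowvar=False)
    # Tr((cov_a cov_b)^(1/2)) equals the sum of square roots of the eigenvalues
    # of cov_a @ cov_b, which are real and non-negative for covariance matrices.
    eigvals = np.linalg.eigvals(cov_a @ cov_b)
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    mean_term = np.sum((mu_a - mu_b) ** 2)
    return float(mean_term + np.trace(cov_a) + np.trace(cov_b) - 2.0 * trace_sqrt)


rng = np.random.default_rng(0)
real_feats = rng.normal(size=(500, 4))  # placeholder for features of real images
fake_feats = real_feats + 1.0           # same covariance, mean shifted by 1 per dimension

print(frechet_distance(real_feats, real_feats))
print(frechet_distance(real_feats, fake_feats))
```

Identical feature sets give a distance of about zero, and a pure mean shift contributes its squared length, which is why lower FID indicates generated images whose feature statistics are closer to those of real images.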

Business Applications

This table provides a quick overview of how INDM can streamline various business operations relating to image generation.

| Tasks | Business Use Cases | Examples |
| --- | --- | --- |
| Image Generation and Restoration | Image and video processing, Medical imaging, Media and Entertainment | Image denoising, Super-resolution, Deblurring |
| Facial Attribute Recognition | Security and surveillance, Advertising, Healthcare | Facial recognition, Emotion detection, Age and gender estimation |
| Image Classification | Autonomous vehicles, Healthcare, E-commerce | Object detection and recognition, Disease diagnosis, Product categorization |

Model Features

The technical model features of INDM include:

Nonlinear diffusion

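INDM is a diffusion model whose diffusion process in data space is nonlinear. A normalizing flow maps the data to a latent space, a linear diffusion runs there, and the resulting process in data space is nonlinear. This allows for greater flexibility in modeling complex data distributions.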

Implicit representation

INDM represents the nonlinear diffusion implicitly: the drift and volatility coefficients in data space are not specified by hand but are induced by the flow network together with the linear latent diffusion, so they are learned as the flow is trained.

Maximum likelihood training

INDM is trained using maximum likelihood estimation, which adjusts the model parameters to maximize the likelihood of the training data. This allows for accurate modeling of the underlying data distribution.
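The flow contributes to the likelihood through the change-of-variables formula: the log-density of a data point is the log-density of its latent code plus the log-determinant of the flow's Jacobian. The sketch below shows only that term, under assumptions: the elementwise `arcsinh` flow is a hypothetical stand-in for the learned flow network, and a standard normal prior replaces the latent diffusion model that INDM actually uses.

```python
import numpy as np


def flow(x):
    """Toy elementwise invertible flow (an assumption, not the INDM flow network)."""
    return np.arcsinh(x)


def log_abs_det_jacobian(x):
    # d/dx arcsinh(x) = 1 / sqrt(1 + x^2), applied independently per coordinate.
    return -0.5 * np.log1p(x**2)


def log_likelihood(x):
    """log p(x) = log p_Z(flow(x)) + log |det d flow / d x|, summed over the last axis."""
    z = flow(x)
    log_prior = -0.5 * (z**2 + np.log(2.0 * np.pi))  # standard normal latent prior
    return np.sum(log_prior + log_abs_det_jacobian(x), axis=-1)


def bits_per_dim(x):
    """Negative log-likelihood converted from nats to bits per dimension."""
    return -log_likelihood(x) / (x.shape[-1] * np.log(2.0))


batch = np.array([[0.0, 0.5, -1.0], [2.0, -0.3, 0.1]])
print(log_likelihood(batch))
print(bits_per_dim(batch))
```

Maximizing this quantity over the flow parameters is the maximum likelihood principle in its simplest form; NLL results for image models are commonly quoted in bits per dimension, as computed by `bits_per_dim`.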

Regularization

INDM uses a regularization term to control the smoothness of the diffusion process. This helps prevent overfitting and improves generalization to unseen data.

Adaptivity

INDM is adaptive, which means that it can adjust the diffusion process based on the local structure of the data. This allows it to better capture complex patterns and variations in the data.

Large-scale training

INDM is trained at scale on multiple GPUs; the authors report using 8 GPUs (see Training time and resources). Distributing training in this way shortens training time.

Model Tasks

Image Classification

INDM can be trained on the CIFAR-10 dataset, whose images fall into 10 classes. INDM itself is a generative model and the reported results are generation metrics (FID and NLL), so classification would require an additional classifier, for example one trained on features or samples from the model. No classification accuracy is reported.

Facial Attribute Recognition

INDM can be trained on the CelebA dataset of face images, where it models the complex variations in facial features. Predicting attributes such as gender, age, and expression would require a separate classifier on top of the generative model. No attribute-recognition accuracy is reported.

Image Generation and Restoration

INDM can be used for image generation and restoration tasks, such as denoising, deblurring, and super-resolution. By modeling the underlying data distribution and adapting the diffusion process based on local image features, INDM can effectively generate and restore high-quality images.
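Generation in a model of this kind amounts to simulating a reverse-time diffusion in latent space and mapping the result back to data space through the inverse flow. The sketch below does this with an Euler-Maruyama discretization on a one-dimensional toy problem. It rests on several assumptions: a VP noise schedule, Gaussian latent data whose exact score replaces a trained score network, and `sinh` as a hypothetical inverse flow.

```python
import numpy as np

# Assumed VP schedule: beta(t) = BETA_MIN + t * (BETA_MAX - BETA_MIN), t in [0, 1].
BETA_MIN, BETA_MAX = 0.1, 20.0


def beta(t):
    return BETA_MIN + t * (BETA_MAX - BETA_MIN)


def vp_coefficients(t):
    integral = BETA_MIN * t + 0.5 * t**2 * (BETA_MAX - BETA_MIN)
    alpha = np.exp(-0.5 * integral)
    return alpha, np.sqrt(1.0 - alpha**2)


def latent_score(z, t, mean, std):
    """Exact score of z_t when z_0 ~ N(mean, std^2); stands in for a score network."""
    alpha, sigma = vp_coefficients(t)
    return -(z - alpha * mean) / ((alpha * std) ** 2 + sigma**2)


def sample_latent(n, steps, mean=1.0, std=0.5, t_end=1e-3, seed=0):
    """Integrate the reverse-time VP SDE from t = 1 down to t_end."""
    rng = np.random.default_rng(seed)
    z = rng.standard_normal(n)  # the marginal at t = 1 is close to N(0, 1)
    times = np.linspace(1.0, t_end, steps + 1)
    for t_cur, t_next in zip(times[:-1], times[1:]):
        dt = t_cur - t_next
        b = beta(t_cur)
        # Reverse drift: f(z, t) - g(t)^2 * score, with f = -0.5 * beta * z and g^2 = beta.
        drift = -0.5 * b * z - b * latent_score(z, t_cur, mean, std)
        z = z - drift * dt + np.sqrt(b * dt) * rng.standard_normal(n)
    return z


z = sample_latent(n=20_000, steps=500)
x = np.sinh(z)  # map latent samples to data space with the toy inverse flow
print(z.mean(), z.std())
```

Changing `steps` is a simple way to see how sample statistics respond to coarser or finer discretization in this toy setting.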

Fine-tuning

The authors haven't explicitly mentioned the fine-tuning methods of INDM. The fine-tuning methods will be updated here soon.

Benchmark Results

Benchmarking is an important process for evaluating the performance of any generative model, including INDM. The key results are:

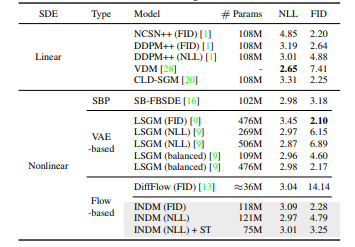

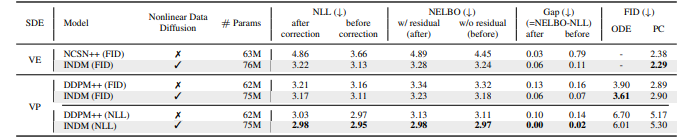

Performance comparison on CIFAR-10.

Performance comparison to linear/nonlinear diffusion models on CIFAR-10. We report the performance of linear diffusions by training our PyTorch implementation based on Song et al. [1, 11] with identical hyperparameters and score networks on both linear/nonlinear diffusions to quantify the effect of nonlinearity in a fair setting. Boldface numbers represent the best performance in a column.

Sample Codes

    import numpy as np
    import tensorflow as tf

    # Optionally cap GPU memory at 4 GB; this must run before the GPU is first used.
    gpus = tf.config.list_physical_devices('GPU')
    if gpus:
        try:
            tf.config.set_logical_device_configuration(
                gpus[0],
                [tf.config.LogicalDeviceConfiguration(memory_limit=1024 * 4)])
        except RuntimeError as e:
            # Raised if the device has already been initialized.
            print(e)

    # Load the training data: images of shape (N, 32, 32, 3).
    train_data = np.load('train_data.npy')
    # Assumption: integer class labels (0-9) live in a separate file.
    # The original snippet loaded no labels, so fit() had no targets.
    train_labels = np.load('train_labels.npy')

    # NOTE: this is a small fully connected classifier used as a placeholder.
    # It is not the INDM architecture (a flow network plus a score network).
    model = tf.keras.models.Sequential([
        tf.keras.Input(shape=(32, 32, 3)),
        tf.keras.layers.Flatten(),
        tf.keras.layers.Dense(512, activation='relu'),
        tf.keras.layers.Dense(256, activation='relu'),
        tf.keras.layers.Dense(128, activation='relu'),
        tf.keras.layers.Dense(10, activation='softmax')
    ])

    # Integer labels pair with the sparse variant of categorical cross-entropy.
    model.compile(loss='sparse_categorical_crossentropy', optimizer='adam', metrics=['accuracy'])

    # Keras places the computation on the GPU automatically when one is available.
    model.fit(train_data, train_labels, epochs=10, batch_size=32)

Model Limitations

- The authors acknowledged that their model has yet to be optimized for high-resolution images, where its computational complexity and memory requirements become a bottleneck.

- They also noted that their model requires careful tuning of the regularization parameter to avoid overfitting.

Other Models

PFGM++

PFGM++ is a family of physics-inspired generative models that embeds trajectories for N-dimensional data in an (N+D)-dimensional space using a simple scalar norm of the additional variables.


MDT-XL2

MDT proposes a mask latent modeling scheme for transformer-based DPMs to improve contextual and relation learning among semantics in an image.


Stable Diffusion

Stable Diffusion is a latent diffusion model for image synthesis that produces high-quality results without the computational requirements of autoregressive transformers.
