LLMs Explained

BIG-bench

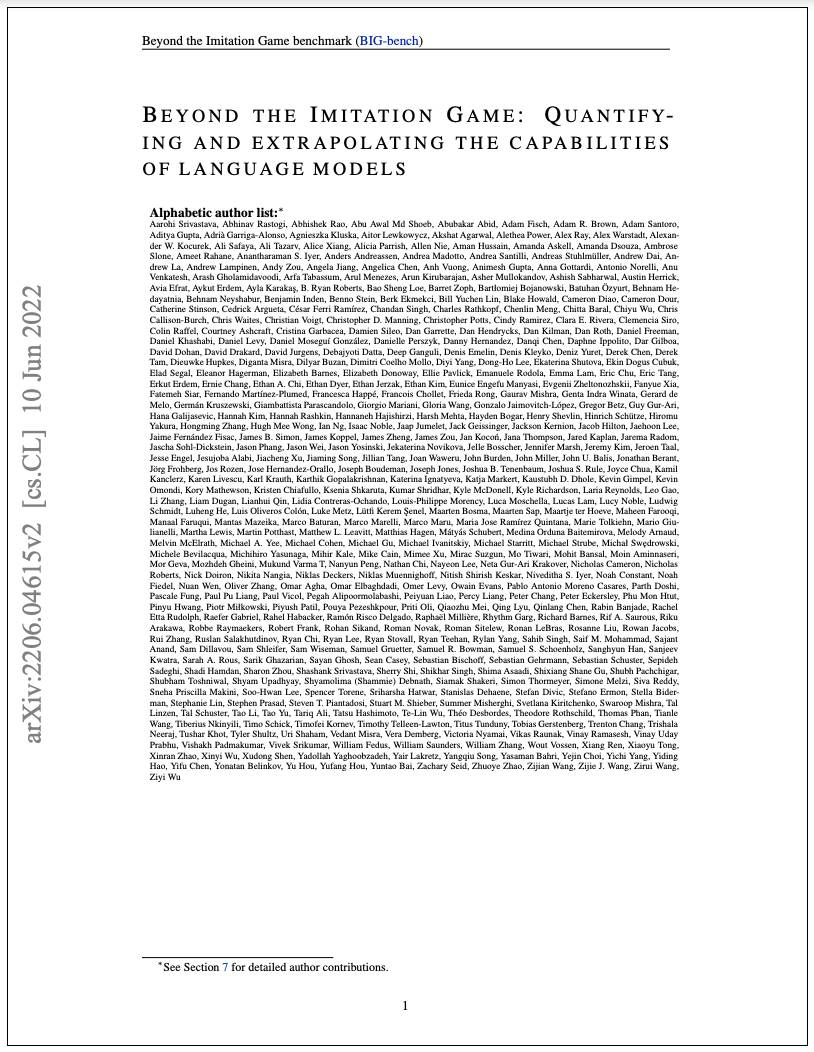

BIG-bench (the Beyond the Imitation Game Benchmark) is a benchmark for evaluating large language models on a broad range of tasks. It is not itself a model. It was introduced in the 2022 paper "Beyond the Imitation Game: Quantifying and extrapolating the capabilities of language models" as a collaborative effort by 444 authors across 132 institutions, and its repository is hosted under Google's GitHub organization. The benchmark assesses the capabilities and limitations of language models across diverse and difficult tasks: it includes 204 tasks spanning domains such as math, physics, linguistics, and social bias. The paper evaluates several model families, including OpenAI's GPT models, Google-internal dense transformer architectures, and Switch-style sparse transformers.

Model Card

An Overview of BIG-Bench

BIG-bench does not report one fixed metric for every task. Each task declares its own metrics, such as exact string match or multiple-choice accuracy, along with a preferred metric, and aggregate results rely on a normalized form of that preferred metric so that scores are comparable across tasks. This gives a standardized and broad evaluation of language models across a wide range of tasks.
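Raw scores from tasks with different chance levels are hard to compare directly. A minimal sketch of one common remedy, min-max normalization onto a 0 to 100 scale, is shown below. The function name, its parameters, and the example numbers are illustrative assumptions and are not taken from the BIG-bench codebase.

```python
def normalize_score(raw: float, low: float, high: float) -> float:
    """Map a raw task score onto a 0-100 scale.

    `low` is the score of a trivial baseline (for example random guessing)
    and `high` is the best achievable score. Both are assumed inputs here.
    """
    if high <= low:
        raise ValueError("high must be greater than low")
    return 100.0 * (raw - low) / (high - low)


# Hypothetical 4-way multiple-choice task: chance accuracy 0.25, perfect 1.0.
print(normalize_score(0.25, low=0.25, high=1.0))   # chance-level accuracy
print(normalize_score(0.625, low=0.25, high=1.0))  # halfway between chance and perfect
```

With this rescaling, chance-level performance maps to 0 regardless of how many answer options a task has, which is what makes averaging across tasks meaningful.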

Evaluated models are pre-trained on large text corpora

15 terabytes of text

BIG-bench itself is a set of tasks, not a text corpus. The models it evaluates are pre-trained on large-scale text, described here as over 15 terabytes drawn from sources such as Common Crawl and scientific papers, and the benchmark then poses questions to them across many fields of study.

Consists of 204 tasks contributed by many authors

444 contributing authors

BIG-bench currently consists of 204 tasks contributed by 444 authors across 132 institutions. The tasks draw on problems from linguistics, math, common-sense reasoning, social bias, and beyond.

Handles extremely diverse and difficult tasks

200 diverse tasks

The original paper's authors announced BIG-bench, a comprehensive benchmark to evaluate the performance of language models on over 200 challenging and diverse tasks.

- Introduction
- Business Applications
- Model Features
- Model Tasks
- Getting Started
- Fine-tuning
- Benchmarking
- Sample Codes
- Limitations
- Other LLMs

Introduction to BIG-Bench

Language models have achieved remarkable results on many natural language processing (NLP) tasks, but their capabilities and limitations are not yet fully understood. To address this gap, the authors introduce BIG-bench, which evaluates language models on tasks believed to be beyond their current capabilities. The benchmark aims to inform future research, prepare for disruptive new model capabilities, and reduce the potential for socially harmful effects. BIG-bench consists of 204 diverse tasks covering areas such as text generation, summarization, translation, and question answering. Each task is designed to probe a specific capability or challenge and demands different skills from the model under evaluation.

About Model

BIG-bench comprises 204 tasks written by 444 authors from 132 institutions. The tasks cover subjects including math, physics, linguistics, and social bias. A team of human expert raters completed all tasks to provide a strong baseline. The benchmark assesses several language model families, such as OpenAI's GPT models, Google-internal dense transformer architectures, and Switch-style sparse transformers, at sizes ranging from millions to hundreds of billions of parameters. The authors also examined how model sparsity affects task performance.

The results indicate that model performance and calibration improve with model size, but remain poor in absolute terms and well below the human expert raters. In ambiguous contexts, social bias typically increases with scale, although prompting can reduce it. The authors conclude that BIG-bench is a valuable resource for characterizing the capabilities and limitations of language models and for guiding the development of more capable language-based applications.

Model Type: BIG-bench is not a model; it is a benchmark.

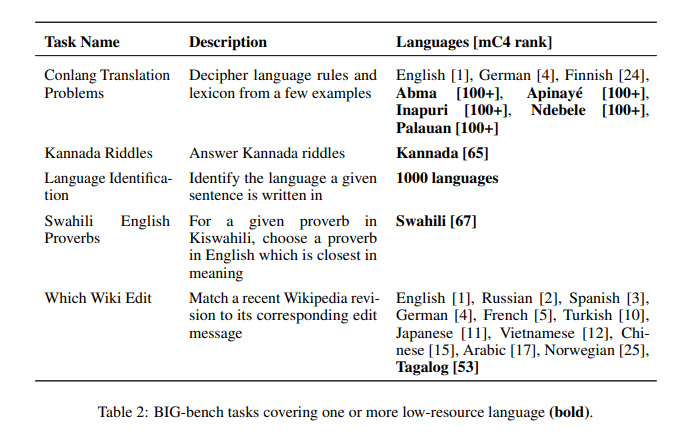

Language(s) (NLP): English, German, Finnish, Abma, Apinayé, Inapuri, Ndebele, Palauan

License: Apache 2.0

Model highlights

The key highlights of the BIG-bench benchmark are as follows.

- BIG-Bench is a benchmarking tool to evaluate language models' present and near-future capabilities and limitations.

- BIG-bench evaluates the behavior of different model classes, including OpenAI's GPT models, Google-internal dense transformer architectures, and Switch-style sparse transformers.

- Model performance and calibration improve with scale but are poor in absolute terms and when compared with rater performance.

- Performance is remarkably similar across model classes, though with benefits from sparsity.

- Tasks that exhibit "breakthrough" behavior at a critical scale often involve multiple steps, components, or brittle metrics.

- Social bias typically increases with scale in settings with ambiguous contexts, but this can be improved with prompting.
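The link between "breakthrough" behavior and brittle metrics in the highlights above can be illustrated with a little arithmetic. The sketch below assumes, purely for illustration, that each token of an answer is produced correctly with the same independent probability; real models do not behave this simply, and the function name is hypothetical.

```python
def exact_match_rate(per_token_accuracy: float, answer_length: int) -> float:
    """Probability that every token of an answer is right.

    Assumes tokens are independent and equally likely to be correct,
    which is a simplification made only for this illustration.
    """
    return per_token_accuracy ** answer_length


# Smooth gains in per-token accuracy produce a sharp rise in exact match.
for p in (0.5, 0.7, 0.9, 0.99):
    rate = exact_match_rate(p, answer_length=10)
    print(f"per-token accuracy {p:.2f} -> exact match on 10 tokens {rate:.3f}")
```

Under this assumption, raising per-token accuracy from 0.7 to 0.9 lifts exact match on a 10-token answer from roughly 0.03 to roughly 0.35, so an all-or-nothing metric can look like a sudden jump even when the underlying improvement is gradual.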

Training Details

Training Data

The research paper on the Big Bench benchmark does not provide details about the training data used for each task. Instead, the paper focuses on evaluating the performance of different language models on the 204 tasks included in the benchmark.

Training Procedure

The training procedure for the Big Bench benchmark is not provided in the research paper, as it is not a training dataset but rather a benchmarking tool for evaluating the performance of pre-trained language models.

Training dataset size

The models evaluated on the benchmark were pre-trained on large corpora of text, described here as over 15 terabytes of text data. The benchmark itself has no training set.

Training time and resources

Training time and resources are not applicable to BIG-bench, because it is a tool for evaluating pre-trained language models on a diverse set of tasks rather than a model or dataset that requires training.

Model Types

BIG-bench does not propose a language model architecture or variants of one. Instead, it evaluates several existing architectures on a diverse set of tasks. The model families evaluated in the paper are OpenAI's GPT models, Google-internal dense transformers, and Switch-style sparse transformers (the BIG-G sparse models listed in the Benchmarking section). All of these are transformer-based neural networks that use self-attention to process input sequences and were pre-trained on large amounts of text with self-supervised objectives. The paper's results across tasks and model sizes give insight into the strengths and weaknesses of these models and inform future research directions in natural language processing.

Business Applications

BIG-bench is an evaluation suite and cannot be deployed on its own. Its results help you choose and validate a language model for a business application. The use cases below are grouped under two headings, language modeling and intent recognition:

| Language modeling | Intent recognition |
| --- | --- |
| Text completion and prediction | Customer service chatbots |
| Sentiment analysis | Voice assistants |
| Text classification | Sales and marketing automation |
| Language translation | Fraud detection and prevention |
| Content generation and summarization | Customer feedback analysis |
| Speech recognition and transcription | Market research and customer profiling |
| Personalization and recommendation systems | Health and wellness coaching |
| Information retrieval and search engines | Educational and training chatbots |
| Fraud detection and spam filtering | E-commerce product recommendations |

Model Features

Because BIG-bench is a benchmark rather than a model, its features concern what it measures and how it measures it. Here are some of the core features of BIG-bench.

Diverse NLP Tasks

The BIG-bench benchmark consists of 204 natural language processing tasks covering many domains and topics. These tasks were contributed by 444 authors across 132 institutions and are believed to be beyond the capabilities of current language models.

Model Evaluation

The benchmark evaluates the behavior of various transformer-based models, including OpenAI's GPT models, Google-internal dense transformer architectures, and Switch-style sparse transformers. Model sizes range from millions to hundreds of billions of parameters.
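Many benchmark tasks are multiple choice, where a model is judged by which answer option it considers most likely. The following is a minimal sketch of that idea. The `target_scores` layout is modeled on how multiple-choice examples are commonly written in BIG-bench JSON tasks, while `score_multiple_choice`, `toy_log_prob`, and the log-probability values are hypothetical stand-ins for a real model.

```python
from typing import Callable, Dict


def score_multiple_choice(
    prompt: str,
    target_scores: Dict[str, float],
    log_prob: Callable[[str, str], float],
) -> float:
    """Return the score attached to the option the model rates most likely."""
    best_option = max(target_scores, key=lambda option: log_prob(prompt, option))
    return target_scores[best_option]


# Hypothetical stand-in for a language model's conditional log-probabilities.
TOY_LOG_PROBS = {"12": -0.4, "13": -1.6, "75": -2.9}


def toy_log_prob(prompt: str, option: str) -> float:
    return TOY_LOG_PROBS[option]


example = {
    "input": "What is 7 + 5?",
    "target_scores": {"12": 1.0, "13": 0.0, "75": 0.0},
}
print(score_multiple_choice(example["input"], example["target_scores"], toy_log_prob))
```

Averaging this per-example score over a task gives a multiple-choice accuracy that can be compared across models of different sizes.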

Human Expert Raters

A team of human expert raters performed all tasks in the benchmark to provide a strong baseline. This allows for a comparison between model performance and human performance and identifies areas where models need improvement.

Informing Future Research

The benchmark findings inform future research directions in natural language processing. For example, improving model calibration and reducing social bias are two areas where more research is needed. The benchmark also helps prepare for disruptive new model capabilities and ameliorate socially harmful effects.
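Calibration describes how well a model's stated confidence matches how often it is right. One widely used summary is expected calibration error (ECE), sketched below with equal-width confidence bins. This is a generic illustration rather than the benchmark's own implementation, and the function name and sample values are assumptions.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    num_bins: int = 10,
) -> float:
    """Weighted average gap between confidence and accuracy across bins."""
    total = len(confidences)
    if total == 0 or total != len(correct):
        raise ValueError("inputs must be non-empty and of equal length")

    # Group predictions into equal-width bins by confidence.
    bins = [[] for _ in range(num_bins)]
    for confidence, is_correct in zip(confidences, correct):
        index = min(int(confidence * num_bins), num_bins - 1)
        bins[index].append((confidence, is_correct))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_confidence = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(mean_confidence - accuracy)
    return ece


# Hypothetical model that claims 90% confidence but is right 3 times out of 4.
print(expected_calibration_error([0.9, 0.9, 0.9, 0.9], [True, True, True, False]))
```

A perfectly calibrated model has an ECE of 0; larger values mean the model is over- or under-confident.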

Licensing

BIG-bench is an open-source project released under the Apache 2.0 license. The repository is hosted under Google's GitHub organization, and the benchmark was contributed to by hundreds of authors across multiple institutions.

Level of customization

The Big Bench benchmark is designed to be a standardized benchmark for evaluating the capabilities of various natural language processing models. The benchmark is not designed for customization or adaptation to specific use cases. However, the benchmark can be used to compare the performance of different models on a wide range of natural language processing tasks. If a specific use case requires customized natural language processing capabilities, additional training on specific datasets or fine-tuning pre-trained models may be necessary.

Available pre-trained model checkpoints

BIG-bench does not itself distribute pre-trained model checkpoints. It evaluates models supplied by the user, so any pre-trained checkpoint, for example a publicly available Hugging Face model, can serve as the starting point for evaluation, further fine-tuning, or downstream tasks. Starting from a pre-trained model instead of training from scratch saves researchers and practitioners time and computational resources.

Model Tasks

Linguistics Tasks

These tasks involve testing the model's understanding of the structure and rules of language, including tasks such as identifying parts of speech, generating grammatically correct sentences, and correcting grammatical errors.

Reasoning Tasks

These tasks test the model's ability to reason about language, including tasks such as answering questions about a passage of text, predicting the output of a math equation, and identifying the source of a sentence.
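A small arithmetic task makes the reasoning category concrete. The sketch below defines a toy task as a Python dictionary and scores predictions by exact string match. The field names follow the layout commonly used for BIG-bench JSON tasks, but the task `toy_addition`, its examples, and the scoring helper are hypothetical, so consult the repository's task documentation for the authoritative schema.

```python
import json
from typing import Dict, List

# Hypothetical task definition, written in the style of a JSON task file.
task: Dict = {
    "name": "toy_addition",
    "description": "Add two small integers.",
    "keywords": ["arithmetic"],
    "metrics": ["exact_str_match"],
    "preferred_score": "exact_str_match",
    "examples": [
        {"input": "What is 2 plus 3?", "target": "5"},
        {"input": "What is 10 plus 7?", "target": "17"},
    ],
}


def exact_str_match(predictions: List[str], task: Dict) -> float:
    """Fraction of predictions equal to the target after trimming whitespace."""
    targets = [example["target"] for example in task["examples"]]
    if len(predictions) != len(targets):
        raise ValueError("one prediction is required per example")
    hits = sum(p.strip() == t for p, t in zip(predictions, targets))
    return hits / len(targets)


print(json.dumps(task, indent=2))
print(exact_str_match(["5", "18"], task))  # one of two answers is correct
```

Exact match gives no partial credit, so an answer of "18" for a target of "17" scores the same as a completely unrelated answer.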

Diversity

The BIG-bench tasks span many domains and topics. The benchmark currently consists of 204 tasks contributed by 444 authors across 132 institutions.

Translation

These tasks involve testing the model's ability to translate between different languages, including translating a sentence from English to Farsi or vice versa.

Text Generation

These tasks test the model's ability to generate coherent and meaningful text, including generating a short story with a given theme or completing a sentence with a missing word.

Getting Started

Clone the BIG-bench repository from GitHub and change into it:

    git clone https://github.com/google/BIG-bench.git
    cd BIG-bench

From the repository root, install the required dependencies and the package itself:

    pip install -r requirements.txt
    pip install -e .

BIG-bench does not ship model checkpoints, so there is nothing further to download from the repository. The model you evaluate is supplied separately. Publicly available Hugging Face models such as gpt2 are downloaded automatically the first time they are loaded.

Check the repository README for the current script names and flags before running anything. The README describes evaluating a task with a Hugging Face model using a command of this form:

    python bigbench/evaluate_task.py --models gpt2 --task {task_name}

where {task_name} is the name of the task's directory under bigbench/benchmark_tasks. For example, to evaluate the arithmetic task with gpt2:

    python bigbench/evaluate_task.py --models gpt2 --task arithmetic

The Google-internal dense models and Switch-style sparse models evaluated in the paper are not publicly available, so they cannot be passed to this script.

Note that evaluating large models requires a large amount of memory and may take several hours.

Fine-tuning

BIG-bench is an evaluation suite and is not itself fine-tuned. The techniques below apply to the models being evaluated and can improve their scores on specific tasks:

Customizing the training data

The training data can be customized to focus on specific domains or tasks of interest. This can help improve the model's performance on those tasks.

Adjusting the hyperparameters

Fine-tuning the hyperparameters of the model, such as the learning rate, batch size, and number of training epochs, can improve its performance on specific tasks.

Transfer learning

Pre-trained models can be fine-tuned on new tasks by adding a few task-specific layers and retraining the model on the new data. This can improve the model's performance on the new tasks with less training data.

Ensembling

Combining the predictions of multiple models trained on different data or with different architectures can improve the overall performance of the model.
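Ensembling can be as simple as a majority vote over the answers of several models. The sketch below assumes each model has already produced one string answer per example. The function name and sample answers are hypothetical.

```python
from collections import Counter
from typing import List


def majority_vote(per_model_predictions: List[List[str]]) -> List[str]:
    """Pick the most common answer for each example across models.

    Ties go to the answer that appears first among the models, because
    Counter.most_common preserves first-seen order for equal counts.
    """
    if not per_model_predictions:
        raise ValueError("at least one model's predictions are required")
    ensembled = []
    for answers in zip(*per_model_predictions):
        ensembled.append(Counter(answers).most_common(1)[0][0])
    return ensembled


# Three hypothetical models answering the same three questions.
predictions = [
    ["a", "b", "c"],
    ["a", "c", "c"],
    ["b", "b", "c"],
]
print(majority_vote(predictions))
```

Voting works best when the models make different mistakes; models that share the same errors gain little from being combined.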

Benchmarking

Table 1 shows BIG-bench tasks covering one or more low-resource languages.

Table 2 shows BIG-G sparse model sizes and parameters.

| Non-Emb. Params | FLOP eq. | nlayers | dmodel | df | nheads | nkv | nmoe | nexperts |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 51M | 3M | 1 | 256 | 2048 | 4 | 128 | 1 | 32 |
| 212M | 18M | 2 | 512 | 4096 | 8 | 128 | 1 | 32 |
| 495M | 60M | 3 | 768 | 6144 | 12 | 128 | 1 | 32 |
| 1.7B | 147M | 4 | 1024 | 8192 | 16 | 128 | 2 | 32 |
| 2.7B | 282M | 5 | 1280 | 10240 | 20 | 128 | 2 | 32 |
| 3.9B | 481M | 6 | 1536 | 12288 | 24 | 128 | 2 | 32 |
| 7.3B | 1.1B | 8 | 2048 | 16384 | 32 | 128 | 2 | 32 |
| 11.8B | 2.2B | 10 | 2560 | 20480 | 40 | 128 | 3 | 32 |
| 24.7B | 3.8B | 12 | 3072 | 24576 | 48 | 128 | 3 | 32 |
| 46.0B | 8.9B | 16 | 4096 | 32768 | 64 | 128 | 4 | 32 |
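The first two columns of the table can be compared directly. The sketch below computes how many non-embedding parameters each sparse model holds per parameter in the "FLOP eq." column. Reading "FLOP eq." as the size of a dense model with matching compute is an interpretation of the column header, and the helper names are hypothetical.

```python
def parse_count(text: str) -> float:
    """Convert strings such as '51M' or '1.7B' to a number of parameters."""
    multipliers = {"M": 1e6, "B": 1e9}
    return float(text[:-1]) * multipliers[text[-1]]


def sparsity_ratio(total_params: str, flop_equivalent: str) -> float:
    """Non-embedding parameters divided by the FLOP-equivalent size."""
    return parse_count(total_params) / parse_count(flop_equivalent)


# (Non-Emb. Params, FLOP eq.) pairs copied from the first and last table rows.
for total, flop_eq in [("51M", "3M"), ("46.0B", "8.9B")]:
    print(f"{total} / {flop_eq} = {sparsity_ratio(total, flop_eq):.1f}x")
```

On these two rows the ratio falls from 17x for the smallest model to about 5x for the largest.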

Sample Code 1

Running a model on a CPU

    import torch
    import transformers

    # BIG-bench ships no model of its own. BigBird-RoBERTa is used here
    # only as an example of a publicly available checkpoint.
    model_name = "google/bigbird-roberta-base"
    tokenizer = transformers.AutoTokenizer.from_pretrained(model_name)
    model = transformers.AutoModelForMaskedLM.from_pretrained(model_name)
    model.eval()

    # Sample input sentence with one masked word
    input_sentence = f"The quick brown fox jumps over the {tokenizer.mask_token}."

    # Tokenize the input sentence
    tokens = tokenizer(input_sentence, return_tensors="pt")

    # Run the model without tracking gradients
    with torch.no_grad():
        outputs = model(**tokens)

    # Locate the masked position and take the highest-scoring token there
    is_mask = tokens["input_ids"][0] == tokenizer.mask_token_id
    predicted_ids = outputs.logits[0, is_mask].argmax(dim=-1)

    # Convert the predicted token back to a string
    print(tokenizer.decode(predicted_ids))

Sample Code 2

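Running a model on a GPU

    import torch
    from transformers import AutoModel, AutoTokenizer

    # Load the pre-trained model and tokenizer. BigBird-RoBERTa is only an
    # example of a public checkpoint and is not part of BIG-bench.
    model_name = "google/bigbird-roberta-base"
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModel.from_pretrained(model_name)

    # Use the GPU when one is available, otherwise fall back to the CPU
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    model.to(device)
    model.eval()

    # Sample input
    input_text = "Hello, how are you doing today?"

    # Tokenize the input and move the tensors to the same device as the model
    inputs = tokenizer(input_text, return_tensors="pt").to(device)

    # Pass the input through the model without tracking gradients
    with torch.no_grad():
        outputs = model(**inputs)

    # last_hidden_state has shape (batch, sequence_length, hidden_size)
    print(outputs.last_hidden_state.shape)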

Limitations

While the BIG-bench project provides valuable insights into the capabilities and limitations of state-of-the-art language models, there are still some limitations to be aware of:

Limited set of tasks

While BIG-bench includes a diverse set of 204 tasks, it is still a limited set and may not cover all possible use cases or scenarios.

Pretrained models may not be sufficient

BIG-bench measures pre-trained models as they are and does not supply models of its own. A model that scores well on the benchmark may still require further fine-tuning or customization to achieve optimal performance on a specific task.

Computationally intensive

The large-scale language models used in BIG-bench are computationally intensive and require significant resources to train and run, which may be a limitation for some organizations or individuals.

Limited interpretability

While BIG-bench provides insights into the capabilities of language models, it can be difficult to interpret how and why the models make certain predictions or decisions, which may be a limitation in some use cases where interpretability is important.